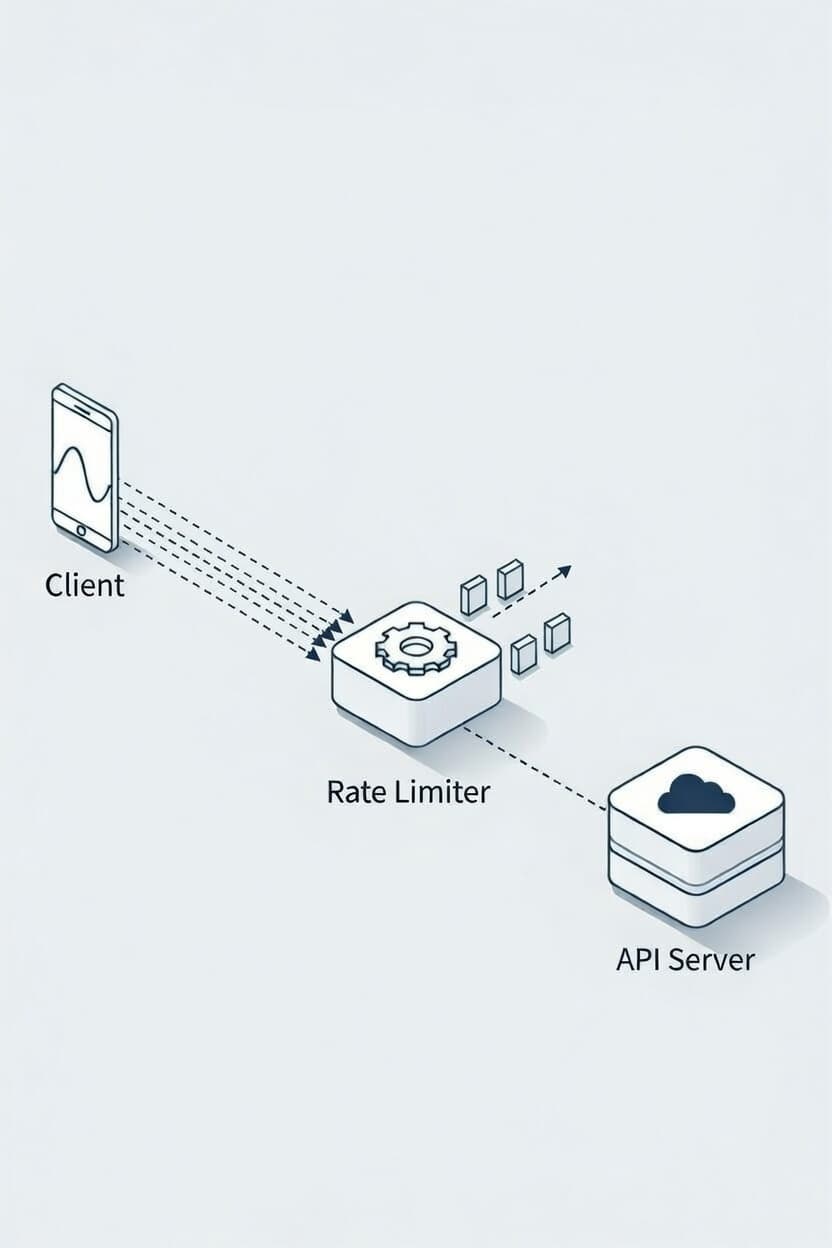

Rate Limiter

A Rate Limiter is a mechanism used to control the rate of incoming requests to a server or API. This blog post discusses the importance of rate limiting, common strategies, and implementation techniques.

Rate limiting is crucial for several reasons:

The choice of rate limiting strategy depends on the specific requirements of the application, including:

| Strategy | Pros | Cons | Most common real-world use case |
|---|---|---|---|
| Fixed Window Counter | Simple to implement | Can lead to bursts of traffic at window boundaries | Simple quotas (100/day, 5000/month) |
| Sliding Window Log | More granular control | Higher memory usage | Fairest option; precise per-user limiting |
| Sliding Window Counter | Balances control and memory usage | Requires careful weighted calculation | Good balance between Fixed Window Counter and Sliding Window Log |
| Token Bucket | Flexible and allows bursts | More complex to implement | Most popular for APIs (Stripe, AWS, Google, ...) |
| Leaky Bucket | Smooths out traffic spikes | Can introduce latency | Traffic shaping, queues, constant-rate systems |
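The "weighted calculation" listed for Sliding Window Counter can be sketched as follows. This is a minimal illustration rather than a production implementation; the function name `slidingWindowEstimate` and its parameters are assumptions made for this example.

```typescript
// Sliding Window Counter: estimate how many requests fall inside the last
// `windowMs` by weighting the previous fixed window's count by the fraction
// of that window still covered by the sliding window.
// (Hypothetical helper, named for illustration only.)
function slidingWindowEstimate(
  prevCount: number, // requests counted in the previous fixed window
  currCount: number, // requests counted so far in the current fixed window
  elapsedInCurrentMs: number, // time elapsed since the current window started
  windowMs: number, // window length in milliseconds
): number {
  const overlap = Math.max(0, 1 - elapsedInCurrentMs / windowMs);
  return prevCount * overlap + currCount;
}

// 15 s into a 60 s window, 75% of the previous window still counts:
// 80 * 0.75 + 30 = 90
const earlyEstimate = slidingWindowEstimate(80, 30, 15_000, 60_000);

// 45 s in, only 25% of the previous window still counts:
// 80 * 0.25 + 30 = 50
const lateEstimate = slidingWindowEstimate(80, 30, 45_000, 60_000);
```

A request would then be allowed while the estimate stays below the configured limit. Only two counters per key are stored, which is why this strategy uses far less memory than keeping a full log of timestamps.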

In simple terms, Token Bucket is the most popular strategy used in real-world applications due to its flexibility and ability to handle bursts of traffic effectively. Fixed Window Counter is often used for simple quota systems, while Sliding Window Log provides the most fairness at the cost of higher memory usage. Sliding Window Counter offers a balanced approach between control and resource consumption. Leaky Bucket is ideal for scenarios requiring smooth traffic flow.
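To make the boundary-burst drawback of Fixed Window Counter concrete, here is a small sketch. The class name `FixedWindowCounter` and the explicit `nowMs` argument are assumptions made for this example (passing the time in keeps the demonstration deterministic); it is not taken from any particular library.

```typescript
// Fixed Window Counter: count requests per fixed time window and reset the
// count whenever a new window begins. (Hypothetical class for illustration.)
class FixedWindowCounter {
  private limit: number;
  private windowMs: number;
  private windowStart = 0;
  private count = 0;

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  allow(nowMs: number): boolean {
    // Align the timestamp to the start of its window.
    const currentWindow = Math.floor(nowMs / this.windowMs) * this.windowMs;
    if (currentWindow !== this.windowStart) {
      this.windowStart = currentWindow;
      this.count = 0; // a new window starts with a fresh count
    }
    if (this.count < this.limit) {
      this.count += 1;
      return true;
    }
    return false;
  }
}

// Limit: 5 requests per 1000 ms window.
const fixedWindow = new FixedWindowCounter(5, 1000);
const timestamps = [900, 920, 940, 960, 980, 1000, 1020, 1040, 1060, 1080];

let allowedCount = 0;
for (const t of timestamps) {
  if (fixedWindow.allow(t)) allowedCount += 1;
}
// allowedCount === 10: five requests at the end of one window and five at
// the start of the next all pass, although they arrive within 180 ms.
```

The limit is respected inside each window, yet twice the limit gets through around the boundary. Sliding windows and Token Bucket are designed to avoid exactly this effect.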

Rate limiting can be implemented using various techniques, including:

Let's implement a simple Token Bucket rate limiter in an Express.js application using TypeScript.

Token Bucket Class:

```typescript
export interface TokenBucketOptions {
  capacity: number; // max tokens
  refillRate: number; // tokens per second
}

export class TokenBucket {
  private capacity: number;
  private tokens: number;
  private refillRate: number;
  private lastRefill: number;

  constructor(options: TokenBucketOptions) {
    this.capacity = options.capacity;
    this.tokens = options.capacity; // start with a full bucket
    this.refillRate = options.refillRate;
    this.lastRefill = Date.now();
  }

  // Add the tokens earned since the last refill, capped at capacity.
  private refill() {
    const now = Date.now();
    const elapsedSeconds = (now - this.lastRefill) / 1000;
    const refillTokens = elapsedSeconds * this.refillRate;

    this.tokens = Math.min(this.capacity, this.tokens + refillTokens);
    this.lastRefill = now;
  }

  // Take `tokens` from the bucket if enough are available.
  consume(tokens: number = 1): boolean {
    this.refill();

    if (this.tokens >= tokens) {
      this.tokens -= tokens;
      return true;
    }

    return false;
  }

  getTokens() {
    this.refill();
    return this.tokens;
  }
}
```
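The refill step in the class above is plain arithmetic, so it can be checked by hand. The standalone function below mirrors that formula with the elapsed time passed in explicitly; the name `refilledTokens` is an assumption for this example and is not part of the class.

```typescript
// Same formula as TokenBucket.refill(), but with the elapsed time supplied
// by the caller so the result is deterministic.
// (Hypothetical helper, named for illustration only.)
function refilledTokens(
  tokens: number, // tokens currently in the bucket
  capacity: number, // maximum tokens the bucket can hold
  refillRate: number, // tokens added per second
  elapsedMs: number, // time since the last refill
): number {
  return Math.min(capacity, tokens + (elapsedMs / 1000) * refillRate);
}

// Empty bucket, 2 tokens/second, 1.5 s later: 0 + 1.5 * 2 = 3 tokens.
const afterShortWait = refilledTokens(0, 10, 2, 1500);

// Nearly full bucket, 5 s later: 8 + 10 = 18, capped at the capacity of 10.
const afterLongWait = refilledTokens(8, 10, 2, 5000);

// Empty bucket, 250 ms later: 0.5 tokens, so consume(1) would still fail.
const afterQuarterSecond = refilledTokens(0, 10, 2, 250);
```

Because tokens accumulate fractionally, a client that retries too early is still rejected, while the cap at `capacity` bounds how large a burst can be after a long idle period.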

Rate Limiter Class:

```typescript
import { TokenBucket } from "./token-bucket";

// In-memory store, one bucket per key. This could live in Redis instead.
const buckets = new Map<string, TokenBucket>();

interface RateLimitConfig {
  capacity: number;
  refillRate: number;
}

export class RateLimiter {
  constructor(private config: RateLimitConfig) {}

  // Return the bucket for this key, creating it on first use.
  private getBucket(key: string): TokenBucket {
    if (!buckets.has(key)) {
      buckets.set(
        key,
        new TokenBucket({
          capacity: this.config.capacity,
          refillRate: this.config.refillRate,
        }),
      );
    }

    return buckets.get(key)!;
  }

  allowRequest(key: string): boolean {
    const bucket = this.getBucket(key);
    return bucket.consume(1);
  }
}
```
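One thing to keep in mind with the in-memory map above: it gains an entry for every new key and never removes any, so a long-running process that sees many distinct clients will keep growing. A possible mitigation is to evict buckets that have been idle for a while. The sketch below is one way to do that; the `TrackedBucket` shape, the `lastSeenMs` field, and the `evictIdleBuckets` function are all assumptions for this example, since the `TokenBucket` class above does not expose its last-access time.

```typescript
// Hypothetical bucket record that remembers when its key was last seen.
interface TrackedBucket {
  tokens: number;
  lastSeenMs: number;
}

// Remove every bucket that has not been used for more than `maxIdleMs`.
// Returns the number of evicted entries.
function evictIdleBuckets(
  store: Map<string, TrackedBucket>,
  nowMs: number,
  maxIdleMs: number,
): number {
  let removed = 0;
  // Deleting entries while iterating with Map.prototype.forEach is safe.
  store.forEach((bucket, key) => {
    if (nowMs - bucket.lastSeenMs > maxIdleMs) {
      store.delete(key);
      removed += 1;
    }
  });
  return removed;
}

const store = new Map<string, TrackedBucket>();
store.set("203.0.113.7", { tokens: 10, lastSeenMs: 0 }); // idle for 120 s
store.set("203.0.113.8", { tokens: 4, lastSeenMs: 110_000 }); // idle for 10 s

// At t = 120 s with a 60 s idle limit, only the first entry is evicted.
const evicted = evictIdleBuckets(store, 120_000, 60_000);
```

In a real service this sweep could run on a timer. An idle bucket would have refilled to full capacity anyway once the idle limit exceeds `capacity / refillRate` seconds, so evicting it does not change the limit a returning client sees. With Redis, a key expiry would typically serve the same purpose.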

Middleware for Express.js:

```typescript
import { Request, Response, NextFunction } from "express";
import { RateLimiter } from "./rate-limiter";

const limiter = new RateLimiter({
  capacity: 10, // max 10 requests
  refillRate: 2, // 2 requests per second
});

export function rateLimiterMiddleware(
  req: Request,
  res: Response,
  next: NextFunction
) {
  const key: string = req.ip ?? ""; // or userId, apiKey, etc.

  // Reject with 429 when the bucket for this key is empty.
  if (!limiter.allowRequest(key)) {
    return res.status(429).json({
      message: "Too Many Requests",
    });
  }

  next();
}
```
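A 429 response is more useful to clients when it also says how long to wait. The standard `Retry-After` HTTP header exists for this, and for a token bucket the wait can be derived from the number of missing tokens and the refill rate. The helper below is a sketch of that calculation; `retryAfterSeconds` is a hypothetical name, and it assumes `refillRate` is greater than zero.

```typescript
// Seconds until `cost` tokens are available, rounded up to a whole second.
// Assumes refillRate > 0. (Hypothetical helper, named for illustration only.)
function retryAfterSeconds(
  availableTokens: number, // tokens currently in the bucket
  refillRate: number, // tokens added per second
  cost: number = 1, // tokens the request needs
): number {
  if (availableTokens >= cost) return 0;
  return Math.ceil((cost - availableTokens) / refillRate);
}

// Empty bucket at 2 tokens/second: 0.5 s, rounded up to 1.
const waitFast = retryAfterSeconds(0, 2);

// Empty bucket at 0.5 tokens/second: exactly 2 s.
const waitSlow = retryAfterSeconds(0, 0.5);

// Enough tokens already available: no wait.
const waitNone = retryAfterSeconds(3, 2);
```

Wiring this into the middleware above would require the `RateLimiter` to expose the current token count for a key, for example through the bucket's `getTokens()` method. The value could then be sent with Express's `res.set("Retry-After", String(seconds))` before returning the 429 response.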

Example of using the middleware in an Express.js application:

```typescript
import express from "express";
import { rateLimiterMiddleware } from "./middleware";

const app = express();

app.use(rateLimiterMiddleware);

app.get("/", (req, res) => {
  res.send("Hello, rate-limited world!");
});

app.listen(3000, () => {
  console.log("Server running on http://localhost:3000");
});
```

If you want to see the full implementation with Redis, check out this sample repository: Source Code

Rate limiting is an essential technique for managing traffic and ensuring the stability and security of web applications. By understanding the different strategies and implementation techniques, developers can choose the most appropriate approach for their specific use case. The Token Bucket strategy is widely used in real-world applications due to its flexibility and effectiveness in handling bursts of traffic. Implementing rate limiting can help protect your application from abuse, ensure fair usage, and improve overall performance.